AI at Work: Hype vs. Orderly Impact

AI won’t save a chaotic process—but it will ruthlessly accelerate one. This piece cuts through the vendor hype to show business leaders how to measure real AI effectiveness, avoid costly traps, and integrate machine intelligence into systems that already work.

The Reality of AI Effectiveness in the Modern Workplace

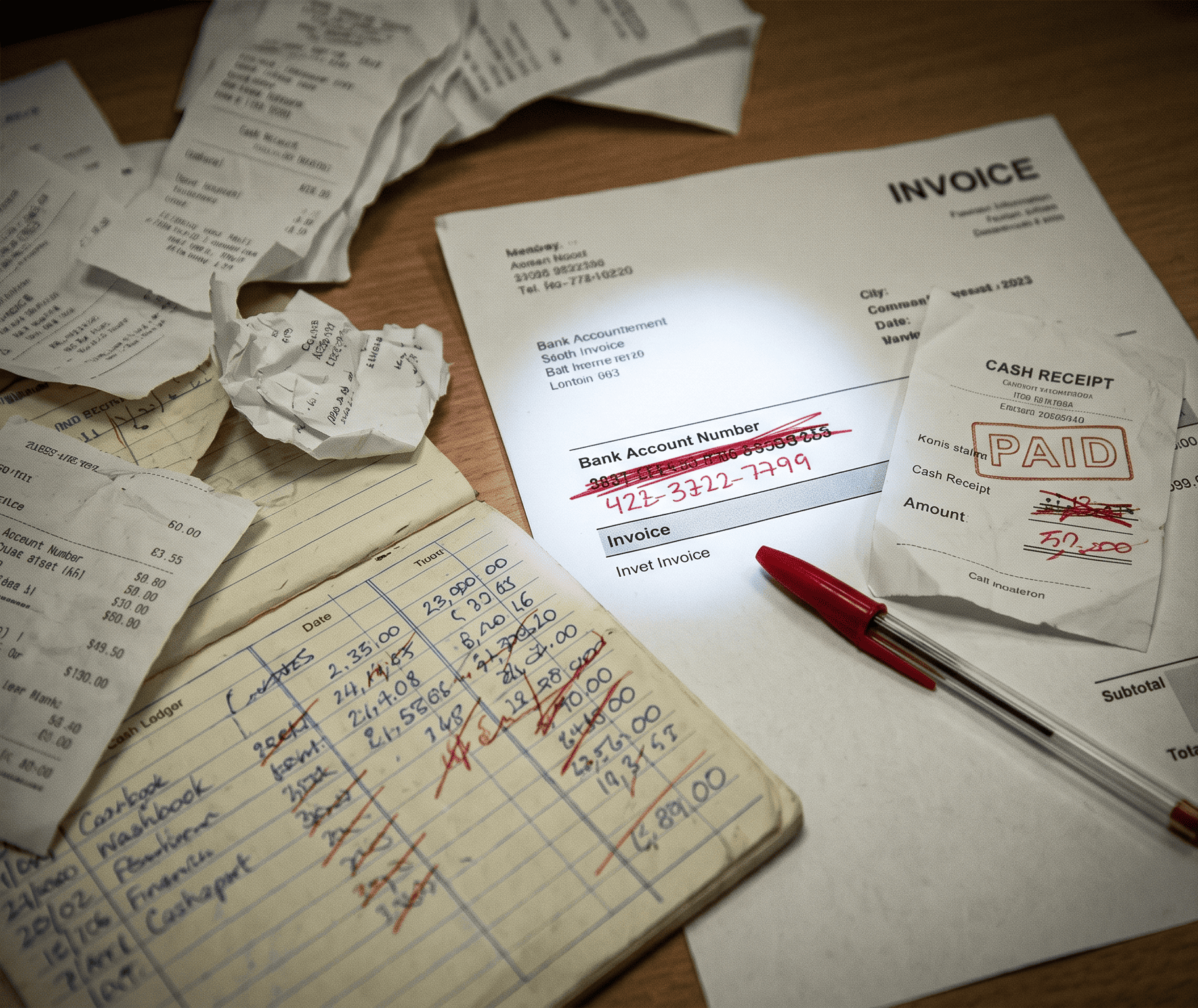

Artificial intelligence is no longer a promise. It’s a spreadsheet line item, a Slack integration, and a quiet ghost in the CRM. But here’s what most vendors won’t tell you: AI doesn’t fix broken workflows. It just finishes breaking them faster.

At Orderly Problem Solvers (OPS), we’ve watched dozens of organizations trip over the same illusion—believing that dropping an LLM or a predictive model into a messy process will somehow clean it up. It won’t. What AI does is expose inefficiency with surgical precision. And if you’re not ready for that exposure, you’re not ready for AI.

“AI is not a strategy. It’s a force multiplier for the strategy you already have—or don’t have.”

— OPS internal principle

Let’s walk through what AI effectiveness actually looks like in the modern workplace, without the fog machines.

The Three Realities Leaders Keep Missing

1. AI amplifies your existing order (or disorder)

If your team already uses clear naming conventions, standardized intake forms, and documented approval flows, AI will make those steps nearly invisible. If you don’t? AI will hallucinate, duplicate, and route decisions into black holes—faster than any human ever could.

2. ROI follows process hygiene, not model complexity

The companies seeing 30%+ time savings aren’t using exotic, custom-trained frontier models. They’re using basic classification models on clean, labeled data. Hygiene before heroics.

3. The biggest risk isn’t bias or job loss—it’s silent failure

Most AI mistakes today aren’t dramatic. They’re subtle: an auto-tagged expense report misrouted, a customer sentiment score quietly inverted, a forecast that looks plausible but is wrong in the same direction every quarter. Without an orderly audit loop, you’ll never know.
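One way to catch the "wrong in the same direction" failure is to look at the sign of the errors, not just their size. The sketch below is a minimal illustration, not a prescribed audit method: the function names, the 0.8 threshold, and the sample numbers are all assumptions made for the example.

```python
from statistics import mean


def directional_bias(forecasts, actuals):
    """Summarise whether forecast errors lean consistently one way.

    Hypothetical helper: returns the mean signed error and the share of
    periods that fall on the dominant side (over- or under-forecast).
    """
    if len(forecasts) != len(actuals) or not forecasts:
        raise ValueError("forecasts and actuals must be non-empty and equal length")
    errors = [f - a for f, a in zip(forecasts, actuals)]
    over = sum(1 for e in errors if e > 0)   # periods where the forecast overshot
    under = sum(1 for e in errors if e < 0)  # periods where the forecast undershot
    return {
        "mean_signed_error": mean(errors),
        "dominant_share": max(over, under) / len(errors),
    }


def looks_one_sided(forecasts, actuals, share_threshold=0.8):
    """Flag a forecast series for review; the 0.8 threshold is an assumed default."""
    return directional_bias(forecasts, actuals)["dominant_share"] >= share_threshold


# Illustrative numbers only: four quarters of forecasts vs. actuals.
quarterly_forecasts = [110, 120, 105, 130]
quarterly_actuals = [100, 100, 100, 100]
if looks_one_sided(quarterly_forecasts, quarterly_actuals):
    print("Forecast errors lean one way: send to human review")
```

A forecast that misses high in some quarters and low in others would not trip this flag, which is the point: plausible-looking size of error can hide a consistent direction.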

Common Pitfalls: Where Smart Teams Waste Money

Pilot purgatory – Running six concurrent AI proofs-of-concept with no production integration.

The “black box” handoff – Letting AI make a recommendation without requiring a human to log a reason for override or acceptance.

Data debt denial – Believing your CRM is clean because no one has looked at the “Notes” field for 14 months.

Metric myopia – Measuring AI on accuracy alone instead of time-to-resolution or rework rate.

A Simple Framework: Orderly AI Integration

If you want AI to work for you instead of on you, follow this sequence:

1. Map before you automate – Document the current state of any process you intend to accelerate. Include exception paths.

2. Tag your truth – Identify which fields, decisions, or handoffs currently cause delays. Those are your AI insertion points.

3. Run a silent shadow – Let AI propose outputs for two weeks without acting on them. Compare against human decisions.

4. Build a feedback switch – Every AI suggestion must have a “correct / incorrect / unsure” toggle. Collect that signal.

5. Review weekly, not quarterly – AI drift is real. Your Monday morning review should include three AI output samples from the past seven days.
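The shadow run and the feedback switch can be sketched as a simple log of records. This is an illustration under assumptions, not a reference design: `ShadowRecord`, its fields, and the sample cases are hypothetical, and a real system would store these in whatever ticketing or CRM tool you already use.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# The three toggle values named in the framework above.
VALID_FEEDBACK = {"correct", "incorrect", "unsure"}


@dataclass
class ShadowRecord:
    """One case from a shadow run (hypothetical structure for illustration)."""
    case_id: str
    ai_output: str                  # what the AI proposed, never acted on
    human_decision: str             # what the human actually did
    feedback: Optional[str] = None  # reviewer toggle, if one was recorded


def agreement_rate(records):
    """Share of cases where the AI proposal matched the human decision."""
    if not records:
        raise ValueError("no shadow records to compare")
    matches = sum(1 for r in records if r.ai_output == r.human_decision)
    return matches / len(records)


def feedback_summary(records):
    """Count the correct / incorrect / unsure signals collected so far."""
    counts = Counter()
    for r in records:
        if r.feedback is None:
            continue  # no toggle recorded for this case
        if r.feedback not in VALID_FEEDBACK:
            raise ValueError(f"unexpected feedback value: {r.feedback!r}")
        counts[r.feedback] += 1
    return dict(counts)


def disagreements(records):
    """Cases worth sampling in the weekly review."""
    return [r for r in records if r.ai_output != r.human_decision]


# Illustrative data only.
log = [
    ShadowRecord("A-1", "approve", "approve", "correct"),
    ShadowRecord("A-2", "approve", "reject", "incorrect"),
    ShadowRecord("A-3", "reject", "reject", "correct"),
    ShadowRecord("A-4", "escalate", "escalate", "unsure"),
]
print(agreement_rate(log))    # share of matching cases
print(feedback_summary(log))  # counts per toggle value
```

Keeping the disagreements as their own list gives the Monday review a ready-made sample pool instead of relying on whoever remembers a bad output.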

AI Hype vs. Practical AI Reality

| Aspect | The Hype | The Reality |
|---|---|---|
| Implementation timeline | “Plug and play in one week” | 4–12 weeks of data prep and workflow mapping before the first reliable output |
| Employee impact | “AI replaces routine work” | AI replaces routine decisions that follow rules, but exposes every exception a human must now handle |
| Accuracy expectation | “99% correct out of the box” | Often 70–85% on real-world messy data, requiring a human-in-the-loop design |
| ROI measurement | “Time saved = money saved” | Time saved only counts if rework rate stays flat or drops. Otherwise, you’re just generating faster errors |
| Integration effort | “Works with your existing stack” | Works with your stack’s APIs and data schemas, which are probably outdated |
| Governance | “Built-in explainability” | You will build your own logging, override trails, and rollback procedures. No vendor ships those for you |

How to Measure AI ROI Without Fooling Yourself

Track three metrics, and three only, for the first 90 days:

Decision cycle time – From trigger to human-approved action.

Exception rate – How often the AI’s output is rejected or overridden.

Correction cost – Average minutes to fix an AI mistake vs. time to do the task manually.

If the average correction cost exceeds the time it takes to do the task manually, stop. You’ve automated the wrong thing.
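The three metrics and the stop rule are simple enough to compute from a decision log. The sketch below assumes one possible reading of the definitions above: the `Decision` fields, the function name, and the sample values are hypothetical, and correction cost is averaged only over decisions that actually needed a fix.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Decision:
    """One AI-assisted decision (hypothetical log entry for illustration)."""
    cycle_minutes: float       # trigger to human-approved action
    overridden: bool           # AI output rejected or overridden
    correction_minutes: float  # time spent fixing the AI's mistake, 0 if none


def ninety_day_metrics(decisions, manual_minutes):
    """Compute the three metrics plus the stop rule.

    manual_minutes is the assumed average time to do the task by hand.
    """
    if not decisions:
        raise ValueError("no decisions logged")
    fixes = [d.correction_minutes for d in decisions if d.correction_minutes > 0]
    correction_cost = mean(fixes) if fixes else 0.0
    return {
        "decision_cycle_time": mean(d.cycle_minutes for d in decisions),
        "exception_rate": sum(d.overridden for d in decisions) / len(decisions),
        "correction_cost": correction_cost,
        # Stop rule: fixing a mistake costs more than doing the task manually.
        "stop": correction_cost > manual_minutes,
    }


# Illustrative data only.
sample = [
    Decision(10, False, 0),
    Decision(20, True, 30),
    Decision(30, True, 50),
    Decision(20, False, 0),
]
print(ninety_day_metrics(sample, manual_minutes=25))
```

In this sample the average fix takes 40 minutes against 25 minutes of manual work, so the stop flag is set even though half the outputs were accepted untouched.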

The Bottom Line for Leaders

AI effectiveness isn’t about choosing the right model. It’s about choosing the right process to accelerate—and building the feedback loops to catch when it drifts.

Your actionable steps starting tomorrow:

1. Pick one repetitive, rule-heavy task your team hates.

2. Write down every step and exception path (no AI yet).

3. Run that exact process with a simple LLM or classifier in shadow mode for one week.

4. Compare outcomes. Then decide whether to automate, adjust, or abandon.

Orderly Problem Solvers didn’t earn its name by chasing shiny objects. We earned it by fixing the inputs before touching the outputs. AI is a tool, not a savior. Use it like a scalpel—not a sledgehammer.

Ready to audit your AI readiness? Start with your data hygiene. Everything else is noise.